Train Computer Vision Models to See Through Fog

In the real world, vision doesn’t stop when the weather turns. But for many computer vision models, fog is enough to break perception entirely. The haze that softens the landscape for human eyes becomes a severe challenge for machine vision—reducing contrast, scattering light, and erasing the fine edges that models rely on to make sense of a scene.

At AI Verse, we’ve seen firsthand how these conditions test the limits of even the latest models. Yet training models on realistic synthetic fog can dramatically narrow the performance gap between clear and foggy conditions.

When Fog Breaks Vision

Fog does more than blur a picture—it changes the physics of light. Scattering distorts textures and erases shapes, turning once-clear boundaries into ambiguous gradients. A model trained only on clear data may misclassify, miss detections, or lose spatial consistency when deployed in safety-critical conditions such as defense, robotics, or surveillance.

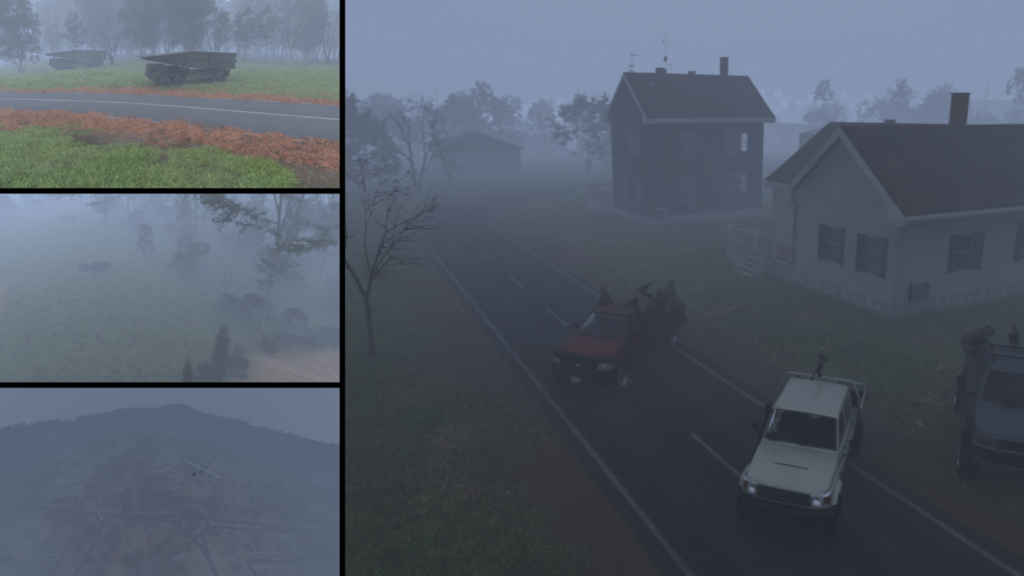

This Clear→Fog domain gap manifests as a sharp drop in accuracy precisely when reliability matters most. Understanding and mitigating this effect is key to building models that can operate safely and autonomously in the world.

Labelled images generated by AI Verse procedural engine

Training with Fog: The Path to Robustness

The most consistent finding from years of research is simple: exposing models to fog makes them stronger. When models train or adapt under foggy conditions—synthetic, real, or mixed—they rapidly regain robustness.

Cross-condition adaptation with contrastive objectives helps align features from clear and adverse environments. The result: state-of-the-art segmentation and detection performance even when visibility falls off a cliff.
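As a rough illustration of what a contrastive alignment objective can look like, the sketch below computes an InfoNCE-style loss over paired clear and foggy features. InfoNCE is one common choice, used here as an assumption rather than the specific objective behind the results above. The function name, the temperature value, and the premise that row i of both arrays comes from the same scene are all illustrative. It is written in NumPy for readability and only computes the loss value; real training would use an autodiff framework.

```python
import numpy as np

def info_nce(clear_feats, fog_feats, temperature=0.1):
    """InfoNCE-style loss over a batch of paired clear/fog feature vectors.

    Row i of both arrays (shape (N, D)) is assumed to come from the same
    scene, so (i, i) is the positive pair and all other rows are negatives.
    """
    # L2-normalise so that the dot product is cosine similarity.
    c = clear_feats / np.linalg.norm(clear_feats, axis=1, keepdims=True)
    f = fog_feats / np.linalg.norm(fog_feats, axis=1, keepdims=True)
    logits = c @ f.T / temperature                # (N, N) similarity matrix
    logits -= logits.max(axis=1, keepdims=True)   # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Cross-entropy with the diagonal (matching scene) as the correct class.
    return -np.mean(np.diag(log_prob))
```

The loss is low when each foggy feature sits closest to the clear feature of the same scene, and rises when foggy features drift toward other scenes.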

Synthetic Fog

High-fidelity synthetic fog can outperform scarce real-world data when it’s grounded in physics and scene geometry. Synthetic imagery lets developers render depth-aware haze, control droplet density, and adjust illumination—creating consistent, labeled data across conditions that would be impossible to capture manually.
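To make "depth-aware haze" concrete, here is a minimal sketch of the standard atmospheric scattering model, in which each pixel is blended toward a fog colour according to its distance from the camera. It assumes homogeneous fog and a known per-pixel depth map. The function names and default values are illustrative, and this is not a description of how any particular rendering engine implements fog.

```python
import numpy as np

def add_fog(image, depth_m, beta=0.05, airlight=0.9):
    """Apply homogeneous fog using the atmospheric scattering model.

    image:    float array (H, W, 3) in [0, 1], the clear scene
    depth_m:  float array (H, W), per-pixel distance to the camera in metres
    beta:     scattering coefficient in 1/m (higher means denser fog)
    airlight: brightness of the fog itself, in [0, 1] (assumed constant)
    """
    # Transmission: fraction of scene light that survives the path to the camera.
    transmission = np.exp(-beta * depth_m)[..., np.newaxis]
    # Blend the attenuated scene with the airlight scattered into the view.
    foggy = image * transmission + airlight * (1.0 - transmission)
    return np.clip(foggy, 0.0, 1.0)

def visibility_m(beta):
    """Meteorological visibility in metres for a 2% contrast threshold."""
    return np.log(50.0) / beta
```

Because transmission decays exponentially with depth, nearby objects stay almost untouched while distant ones fade into the airlight, which is why the depth map matters. Under this model a `beta` of 0.03 corresponds to a visibility of roughly 130 m.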

Studies consistently show that combining synthetic fog with partial real datasets delivers the best generalization. It’s not just simulated data—it’s a systematic strategy to make models weatherproof.

Dehazing With Purpose

Dehazing can help, but only when it serves the downstream task. Task-aware dehazing modules, trained end-to-end with detection or segmentation objectives, can restore cues that matter for recognition. In contrast, visually pleasing dehazing optimized for image quality often fails to translate into better accuracy.

Real deployment demands validation on weather-specific test sets like RTTS or RIS to ensure that improvements are more than cosmetic.

Building Data That Reflects Reality

A balanced dataset for training an AI model may include:

- Synthetic image datasets.

- Real fog data, although it is difficult to obtain.

- Physics-guided synthetic fog, capturing distinct droplet sizes, densities, and lighting conditions.

- Depth-aware rendering that preserves geometry and specular reflections.

These approaches expand coverage of the edge cases that are critical for autonomous systems and drones, especially in changing weather.
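One simple way to combine the sources listed above is weighted sampling at batch time. The sketch below is an assumption about how such a mix could be wired up, not a prescribed recipe: the function name, source names, and weights are hypothetical and would need tuning against a real foggy validation set.

```python
import random

def sample_mixed_batch(sources, weights, batch_size, seed=None):
    """Draw a batch whose items come from several sources in given proportions.

    sources: dict mapping a source name to a list of samples
    weights: dict mapping the same names to non-negative sampling weights
    Returns a list of (source_name, sample) tuples.
    """
    rng = random.Random(seed)
    names = list(sources)
    # Pick a source for each slot, then a random sample from that source.
    picks = rng.choices(names, weights=[weights[n] for n in names], k=batch_size)
    return [(name, rng.choice(sources[name])) for name in picks]

# Hypothetical pools and proportions, for illustration only.
pools = {
    "synthetic_clear": ["sc_0", "sc_1", "sc_2"],
    "synthetic_fog": ["sf_0", "sf_1", "sf_2"],
    "real_fog": ["rf_0"],
}
mix = {"synthetic_clear": 0.4, "synthetic_fog": 0.4, "real_fog": 0.2}
batch = sample_mixed_batch(pools, mix, batch_size=8, seed=0)
```

Sampling by weight rather than concatenating the datasets keeps scarce real fog images from being drowned out by much larger synthetic pools.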

Evaluating Model Robustness

Evaluate not just on clear-weather benchmarks but also in "fog chambers": adverse-weather test suites that reveal real-world performance gaps. Track visibility-dependent metrics: small-object recall, edge fidelity, and fog-density response.

Favor architectures and pre/post-processing steps that improve mission-critical performance under fog, not just overall mAP scores.
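Fog-density response can be tracked by reporting a metric per density bin instead of a single aggregate. The sketch below does this for recall. It assumes each test image is tagged with the scattering coefficient used to render it and that matching detections to ground truth has already been done. The function name, record layout, and bin edges are illustrative.

```python
from collections import defaultdict

def recall_by_fog_bin(records, bin_edges):
    """Compute detection recall separately for each fog-density bin.

    records:   iterable of (beta, n_ground_truth, n_matched) per image, where
               beta is the fog scattering coefficient for that image
    bin_edges: ascending thresholds; bin 0 holds beta <= bin_edges[0], and
               the last bin holds everything above the final threshold
    Returns a dict mapping bin index to recall, skipping empty bins.
    """
    totals = defaultdict(lambda: [0, 0])  # bin index -> [ground truth, matched]
    for beta, n_gt, n_matched in records:
        idx = sum(beta > edge for edge in bin_edges)
        totals[idx][0] += n_gt
        totals[idx][1] += n_matched
    return {idx: hit / gt for idx, (gt, hit) in sorted(totals.items()) if gt > 0}
```

A model whose recall holds steady across bins is robust to fog. One that looks fine in aggregate but collapses in the densest bin is exactly the case a single mAP score hides.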

How AI Verse Helps

AI Verse’s procedural engine is purpose-built for generating any scenario. Our software generates foggy environments on demand, within hours, to reflect real-world conditions. Every pixel comes with labels, ready for training computer vision models for segmentation and detection.

Teams use these capabilities to conduct Clear→Fog adaptation experiments, stress-test their models, and generate custom fog edge cases at scale. The result is a repeatable, data-driven pathway to reliable computer vision under any weather.

Seeing Beyond the Fog

Synthetic data is not a substitute for reality—it’s a way to recreate it with precision. By modeling fog and its impact on vision under controlled, measurable conditions, synthetic imagery gives engineers something that the real world rarely provides: repeatability, coverage, and ground truth.

When used to bridge environmental gaps, such as the Clear→Fog divide, synthetic images become more than training material—they become instruments of resilience. They allow perception systems to learn from conditions that may never occur twice in exactly the same way, transforming unpredictability into preparedness.

With synthetic scenes, computer vision models can see what was once hidden—enabling safer, more reliable autonomy across defense, security, and robotics.