Synthetic Data for Machine Learning: Train Smarter CV Models Without Real-World Images

Synthetic data for machine learning is artificially generated, fully labeled training data that replicates real-world scenarios without capturing a single real-world frame. AI Verse specializes in photorealistic synthetic image datasets for computer vision: generated in 4 seconds per image, with 8 annotation types, across any environment. It is purpose-built for defense, security, and robotics teams that demand precise training data without real-world data bottlenecks.

Say Goodbye to the Computer Vision Data Bottleneck!

Trusted by defense organizations and drone manufacturers

Your CV Model Is Only as Good as Your Training Data. And Real-World Data Is Failing You.

Collecting and labeling real-world image data is slow, expensive, privacy-sensitive, and dangerously sparse at the edge cases that matter most: rare threat scenarios, unusual lighting, uncommon object configurations.

GenAI tools promise a shortcut. But when your model’s job is detecting a drone, a weapon, or a person in danger, you cannot afford hallucinated pixels or physically unrealistic scenes.

There’s a better way.

Why Leading Defense, Security & Autonomy Teams Choose AI Verse Synthetic Data

1. The World’s Fastest Labeled Synthetic Image Generator

4 seconds. Fully labeled. One GPU.

AI Verse produces a complete, annotated synthetic image in 4 seconds on a single GPU and scales to 10 GPUs in parallel. No competitor publicly matches this claim. What used to take days of manual annotation now takes hours of automated generation.
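As a back-of-the-envelope check, the figures above can be turned into wall-clock estimates. The sketch below assumes throughput scales linearly with GPU count, which the text does not state, and the function name `estimated_hours` is purely illustrative.

```python
import math

SECONDS_PER_IMAGE = 4   # per-image generation time on one GPU, as stated above
MAX_GPUS = 10           # parallel GPU count mentioned above


def estimated_hours(num_images: int, gpus: int = 1) -> float:
    """Estimate wall-clock hours to generate `num_images` labeled images.

    Assumption: perfectly linear scaling across GPUs (an idealization).
    """
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    if not 1 <= gpus <= MAX_GPUS:
        raise ValueError(f"gpus must be between 1 and {MAX_GPUS}")
    # Each GPU takes an equal share; the busiest GPU sets the total time.
    images_per_gpu = math.ceil(num_images / gpus)
    return images_per_gpu * SECONDS_PER_IMAGE / 3600


if __name__ == "__main__":
    print(f"{estimated_hours(10_000, gpus=1):.1f} h on 1 GPU")
    print(f"{estimated_hours(10_000, gpus=10):.1f} h on 10 GPUs")
```

Under these assumptions, a 10,000-image dataset takes roughly 11.1 hours on one GPU and roughly 1.1 hours on ten.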

The result: Your team iterates faster, ships models sooner, and spends budget on insight, not grunt work.

2. Two Procedural Engines = One Complete World

Most synthetic data platforms handle indoor and outdoor environments with a single, compromise-driven tool.

AI Verse doesn’t compromise.

- HELIOS — purpose-built for indoor environments

- GAIA — purpose-built for outdoor environments: urban streets, military terrain, open airspace, perimeter zones

Each engine is optimized for the physics, lighting, and object diversity of its domain. The result is training data with domain-specific fidelity that generalist platforms simply can’t match.

3.

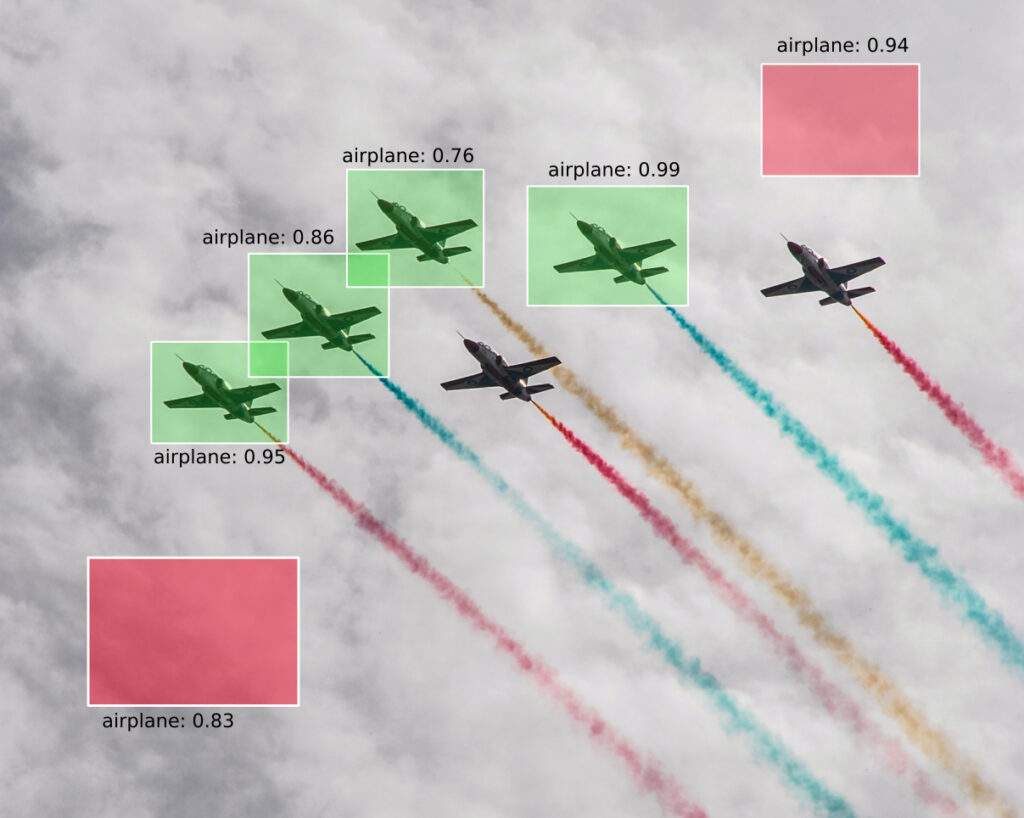

8 Pixel-Perfect Labels. Automatically. Every Time.

One image. Eight annotation types. Zero manual effort.

No other platform in this space publicly offers this breadth of simultaneous auto-annotation as a headline feature. Every label is generated programmatically: no human labelers, no inconsistency, no fatigue errors.

4. Procedural, Not Generative. Physics First, Always.

AI Verse is not a GenAI image generator wearing a data label.

Our procedural engine builds scenes from parameterized rules covering object placement, sensor angles, lighting conditions, and occlusion patterns. You get complete physical realism and full parameter control through an intuitive menu interface. No prompting. No guessing. No hallucinations.
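To make the idea of rule-based generation concrete, here is a minimal sketch of sampling scene parameters from explicit ranges. It is not AI Verse's engine or API: the parameter names, the ranges, and the `sample_scene` helper are all hypothetical, chosen only to show that every value traces back to a stated rule and a seed.

```python
import random
from dataclasses import dataclass

# Hypothetical rule set: every scene parameter is drawn from an explicit,
# human-chosen range rather than produced by a learned model.
RULES = {
    "object_x_m": (-20.0, 20.0),        # object placement, metres
    "object_y_m": (5.0, 60.0),
    "camera_pitch_deg": (0.0, 45.0),    # sensor angle below the horizon
    "sun_elevation_deg": (5.0, 85.0),   # lighting condition
    "occlusion_fraction": (0.0, 0.6),   # share of the object that is hidden
}


@dataclass(frozen=True)
class SceneParams:
    object_x_m: float
    object_y_m: float
    camera_pitch_deg: float
    sun_elevation_deg: float
    occlusion_fraction: float


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one scene description uniformly from the rule ranges."""
    return SceneParams(
        **{name: rng.uniform(lo, hi) for name, (lo, hi) in RULES.items()}
    )


if __name__ == "__main__":
    # The same seed always reproduces the same scene description.
    for seed in range(3):
        print(sample_scene(random.Random(seed)))
```

Because each scene is a function of the rules and the seed, any image can be regenerated or varied one parameter at a time.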

“Generative AI models can memorize and reproduce real-world training data artifacts. Procedural synthesis cannot; it generates from rules, not memories.”

If your model will be deployed in a high-stakes environment, your training data must be built on ground truth. That’s AI Verse.

5. The Only Synthetic Data Platform Purpose-Built for C-UAS and Defense

AI Verse is one of a small number of synthetic data providers that explicitly support defense applications, and the only one to name Drone Detection (C-UAS), Military Vehicle Detection, and Weapon & Threat Detection as tested use cases.

- Fully synthetic pipeline: no sensitive real-world imagery ever enters the training loop

- EU-based, aligned with European defense procurement and data sovereignty requirements

From Parameters to a Pixel-Perfect Dataset in Hours!

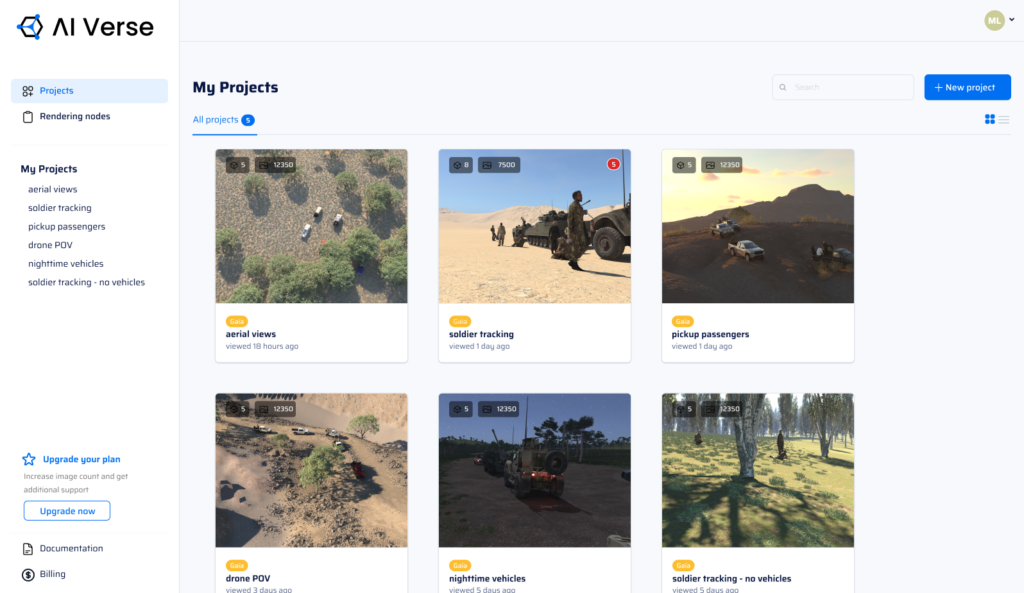

Step 1.

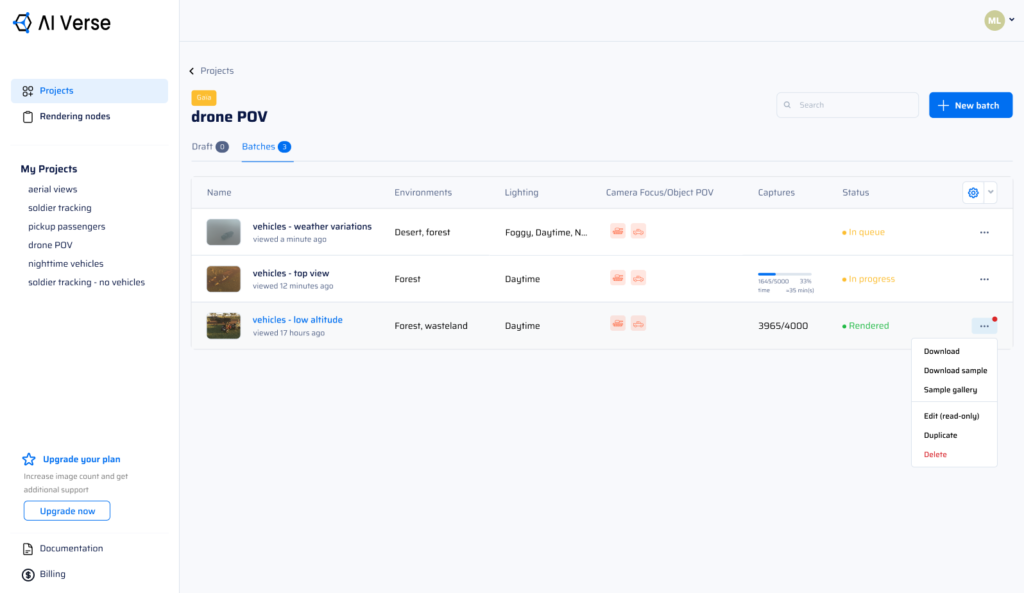

Create a Project

and Configure Your First Batch

Create a project and add your first batch. You can add as many batches as you want to each project.

Step 2.

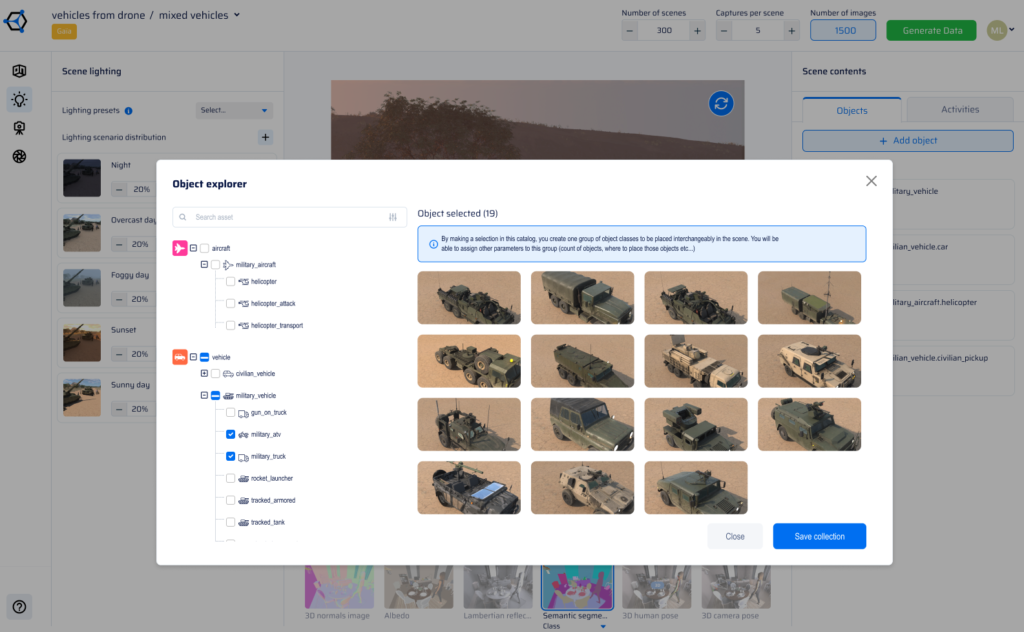

Build Your Scene

and Select Objects of Interest

Select the type of environment you need. Add specific objects of interest from a catalog of 3D assets. Your objects of interest are automatically added to each scene.

Step 3.

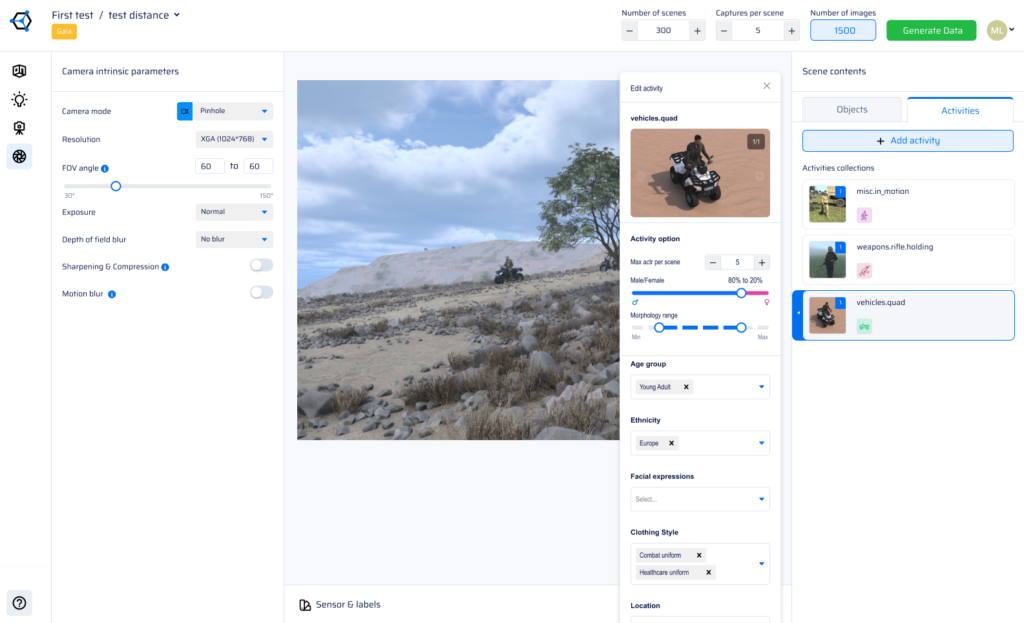

Define Activities and Physical Attributes

Select the activities you are interested in. Set parameters for the characters you add, such as age, gender, ethnicity, and other physical characteristics.

Step 4. Apply Lighting Conditions from Natural to Artificial

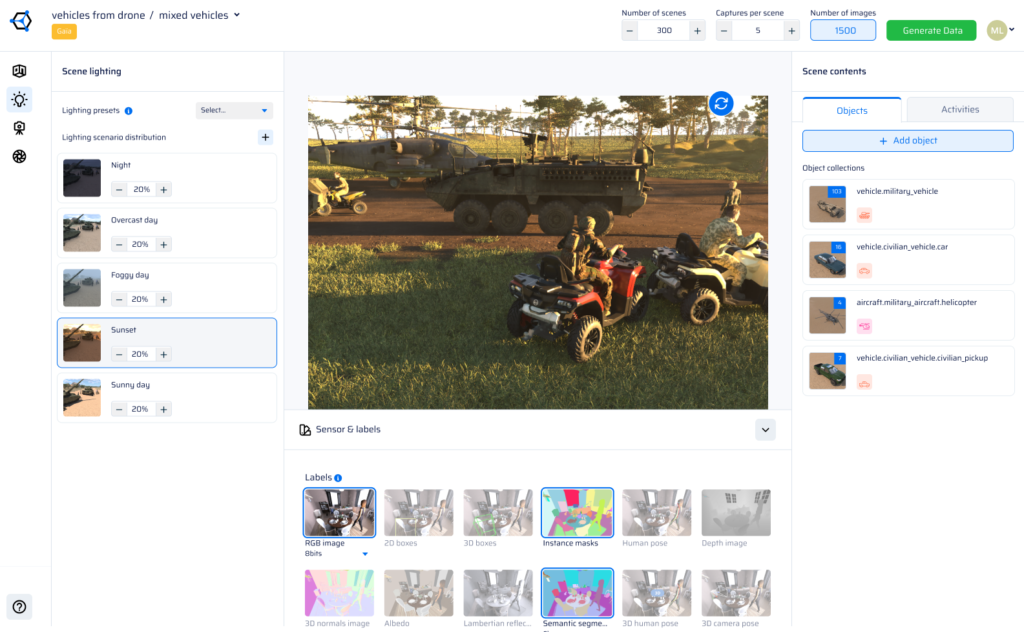

For each batch, select several lighting scenarios from a catalog including various artificial and natural lighting conditions. You can even simulate pictures taken with a flash if desired.

Step 5.

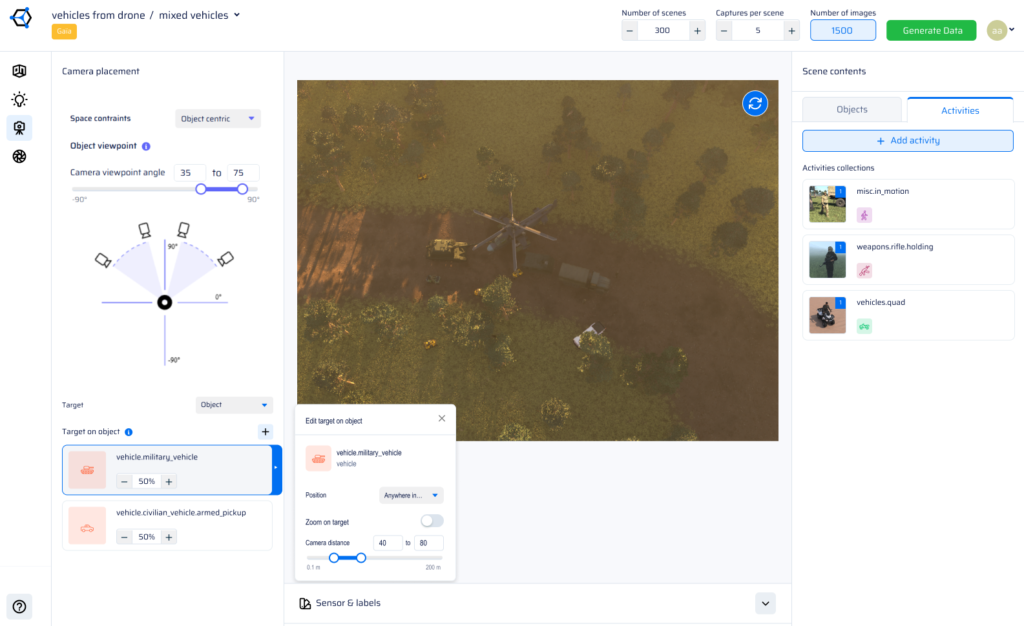

Match Camera Parameters to Your Real Sensor Setup

Set your camera’s intrinsic and extrinsic parameters to match your use case. For example, simulate images from a fixed surveillance camera, a drone, or a satellite.
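For readers less familiar with the terms, the sketch below shows how intrinsic and extrinsic parameters map a 3D point to a pixel under the standard pinhole camera model. It is a generic illustration, not AI Verse code: the function name and the example values are assumptions, and lens distortion is ignored.

```python
from typing import Sequence, Tuple


def project_point(
    point_world: Sequence[float],
    rotation: Sequence[Sequence[float]],   # extrinsic: 3x3 world-to-camera rotation
    translation: Sequence[float],          # extrinsic: world-to-camera translation
    fx: float, fy: float,                  # intrinsic: focal lengths in pixels
    cx: float, cy: float,                  # intrinsic: principal point in pixels
) -> Tuple[float, float]:
    """Project a 3D world point to pixel coordinates (pinhole model, no distortion)."""
    # Extrinsics: move the point into the camera frame, X_c = R @ X_w + t.
    xc, yc, zc = (
        sum(r * p for r, p in zip(row, point_world)) + t
        for row, t in zip(rotation, translation)
    )
    if zc <= 0:
        raise ValueError("point is behind the camera")
    # Intrinsics: perspective divide, scale by focal length, shift to principal point.
    return fx * xc / zc + cx, fy * yc / zc + cy


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    # Illustrative values: a 1920x1080 sensor with a 1000-pixel focal length.
    pixel = project_point((1.0, 0.5, 10.0), identity, (0.0, 0.0, 0.0),
                          fx=1000.0, fy=1000.0, cx=960.0, cy=540.0)
    print(pixel)  # (1060.0, 590.0)
```

Matching these values to the deployed sensor is what keeps object scale and perspective in synthetic images consistent with what the real camera will see.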

Step 6. Choose Annotation Labels and Generate Your Labeled Dataset

Select the labels you need from instance segmentation, semantic segmentation, depth images, 3D normal images, albedo images, the Lambertian reflectance model, and skeleton keypoints. Next, choose the number of scenes and the number of images per scene. Then generate your fully labeled dataset.
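The size of the resulting dataset follows directly from these choices. The sketch below is a hypothetical planning helper, not part of the AI Verse product (which is configured through its menu interface): the `BatchRequest` class and the label identifiers are invented for illustration, and it assumes one annotation output per selected label per image.

```python
from dataclasses import dataclass

# Identifiers are made up for this sketch; they mirror the label types listed above.
AVAILABLE_LABELS = frozenset({
    "instance_segmentation", "semantic_segmentation", "depth", "normals",
    "albedo", "lambertian_reflectance", "skeleton_keypoints",
})


@dataclass(frozen=True)
class BatchRequest:
    labels: frozenset
    num_scenes: int
    images_per_scene: int

    def __post_init__(self) -> None:
        unknown = set(self.labels) - AVAILABLE_LABELS
        if unknown:
            raise ValueError(f"unknown labels: {sorted(unknown)}")
        if self.num_scenes < 1 or self.images_per_scene < 1:
            raise ValueError("num_scenes and images_per_scene must be at least 1")

    @property
    def total_images(self) -> int:
        return self.num_scenes * self.images_per_scene

    @property
    def total_annotations(self) -> int:
        # Assumption: one annotation output per selected label per image.
        return self.total_images * len(self.labels)


if __name__ == "__main__":
    batch = BatchRequest(frozenset({"depth", "albedo"}), num_scenes=50, images_per_scene=20)
    print(batch.total_images, batch.total_annotations)  # 1000 2000
```

For example, 50 scenes with 20 images each and two label types yields 1,000 images and 2,000 annotation outputs.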

Built for the Missions Where Errors Are Not an Option

Counter-UAS & Drone Detection

Train detection models on thousands of synthetic drone configurations, flight altitudes, flight paths, and lighting scenarios, including edge cases too rare or dangerous to capture in the field.

Military Vehicle & Weapons Detection

Generate diverse, physics-accurate scenes of military hardware in varied terrain, lighting, and occlusion conditions. No sensitive real-world data required.

Smart Security & Surveillance

Detect abandoned luggage, anomalous behaviour, and access violations with models trained on dense synthetic indoor scene variation from the HELIOS engine.

Autonomous Navigation & Robotics

Build obstacle detection and path-planning models that generalize across environments, powered by GAIA’s outdoor procedural diversity.

Human Posture

Train posture and activity classifiers using AI Verse’s skeleton and keypoint labels: fall detection, crouching, unauthorized entry, and more.

Synthetic Data for Computer Vision: AI Verse Procedural Tech vs GenAI & Manual Labeling

| | AI Verse | Typical GenAI Tool | Generic Labeling Platform |
|---|---|---|---|
| Generation speed | 4 s/image | Variable | N/A (manual) |
| Label types per image | 8 auto | 0 | Task-specific |
| Indoor engine | HELIOS (dedicated) | | |
| Outdoor engine | GAIA (dedicated) | | |
| Physics-accurate (no hallucinations) | | Risk | |
| Defense / C-UAS use cases | | | |
| Privacy (no real data required) | Fully synthetic | | |