How Synthetic Images Power Edge Case Accuracy in Computer Vision

Edge cases in computer vision are rare, atypical, or safety-critical scenarios that AI models fail to detect reliably because they appear too infrequently in real-world datasets — a camouflaged vehicle in fog, a pedestrian emerging at night, or a partially occluded object. Synthetic image generation makes it possible to produce and annotate these rare scenarios at scale, on demand.

What are edge cases in computer vision?

This creates a critical gap: AI models may achieve high accuracy on benchmarks but fail in the exact conditions where precision matters most — typically in defense, security, or autonomous driving applications.

Synthetic image datasets address this directly. A procedural generation engine can produce thousands of annotated variants of any edge case — varying lighting, weather, occlusion, and camera angle — on demand. The result is a model trained to handle the unexpected before it encounters it in deployment.

In computer vision, the greatest challenge often lies in the unseen. Edge cases—rare, unpredictable, or safety-critical scenarios—are where even state-of-the-art AI models struggle. Whether it’s a jaywalker emerging under low light, a military vehicle camouflaged in complex terrain, or an anomaly appearing in thermal drone footage, these moments can derail performance when not represented in training data.

Synthetic imagery is closing that gap.

By enabling precise control, automated annotation, and scalable generation of rare events, synthetic data is redefining how machine learning models learn to navigate the unexpected.

Why Do Edge Cases Matter for Computer Vision Models?

AI models are only as robust as the data they’re trained on. When rare but critical scenarios are underrepresented—or missing entirely—model behavior becomes fragile and unreliable, particularly in high-stakes domains like defense, surveillance, and healthcare.

Edge cases are:

- Rare and hard to capture

- Logistically expensive and slow to collect

- Often privacy-sensitive

- Crucial to safety and generalization

Real-world datasets often fall short, offering only limited coverage of the variability, complexity, and label precision needed for edge case training. Synthetic image generation, on the other hand, excels in this domain.

What Are the Key Benefits of Synthetic Images for Edge Cases?

1. Generation of Rare Scenarios

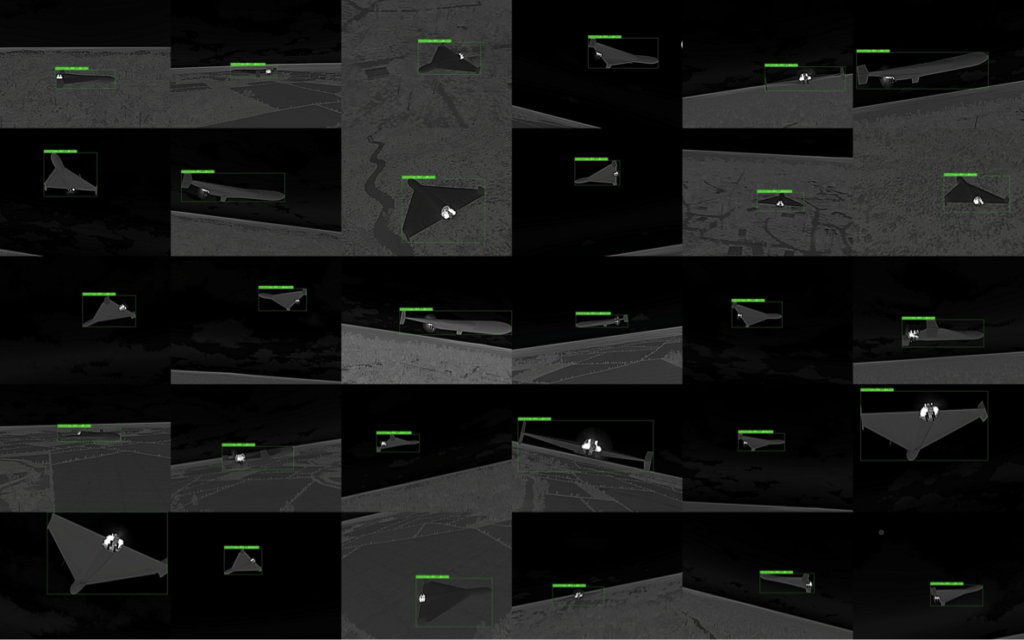

Procedural engines like AI Verse Gaia can generate edge-case conditions on demand—ranging from nighttime surveillance and sensor occlusions to infrared drone views in stormy weather. This ensures your models are exposed to the rarest examples, consistently and at scale.

2. Accelerated, Cost-Effective Data Collection

Collecting real-world data for edge cases—like vehicle detection in foggy weather or various object occlusions—is slow, costly, and often unsafe. Synthetic image generation significantly reduces the time needed to obtain data, with no field deployment or manual annotation required.

3. Built-In Privacy and Compliance

Synthetic data is inherently free of personally identifiable information (PII), making it compliant with GDPR and ideal for surveillance, defense, and other sensitive applications where privacy is paramount.

4. Full Control Over Visual and Contextual Variables

Scene components such as lighting, object position, occlusion, motion blur, and environment can be precisely controlled or randomized, ensuring comprehensive training coverage. The high variability of such generated images further enhances the generalization of computer vision models.

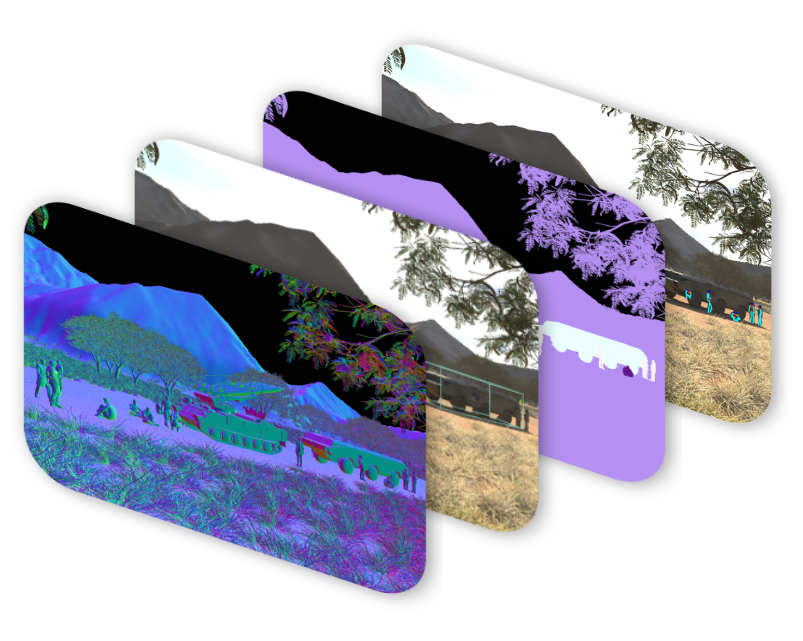

5. High-Fidelity, Pixel-Perfect Datasets

Manual annotation is error-prone and expensive—especially in pixel-level tasks like segmentation. Synthetic datasets come with automatically generated labels (bounding boxes, segmentation masks, depth maps, etc.), reducing label noise and accelerating training cycles.

How Do You Close Edge Case Gaps with Synthetic Image Generation?

The synthetic data generation process for edge case modeling begins by identifying failure points in your existing model—often via error analysis or model explainability tools. Common gaps include:

- Rare object poses or interactions

- Uncommon lighting or weather conditions

- Sensor anomalies (thermal noise, lens flare)

- Obscured or occluded targets

Once identified, computer vision engines can generate thousands of controlled, labeled images simulating these conditions. These images are then integrated into model training, either standalone or as part of a hybrid dataset, reducing false positives and boosting robustness.

Example: A defense contractor used synthetic thermal imagery to simulate vehicle detection under foggy, low-light conditions. After integrating 12,000 synthetic samples into their training set, the model’s precision improved by 21% on real-world nighttime test scenes.

What Should You Do Next?

The shift toward synthetic data is accelerating as AI safety regulations increasingly favor privacy-compliant, synthetic datasets.

Furthermore, as the complexity of AI models grows, synthetic data is evolving from an R&D supplement to a necessity. For edge cases, it offers excellent benefits in coverage, control, and compliance.

At AI Verse, we partner with teams across defense, robotics, and the drone industry to help them simulate diverse scenarios—and train AI models that perform when it counts.

Frequently Asked Questions

How many synthetic images are needed to cover edge cases effectively?

There is no universal number — it depends on the edge case rarity and the model accuracy gap. Teams typically start by generating 2,000–10,000 synthetic variants of a single edge case scenario (varying lighting, weather, angle, and occlusion), then validate accuracy improvement on a held-out test set. The iterative approach — generate, train, test, expand — is more effective than trying to anticipate volume upfront.

Do synthetic edge case images replace real-world data entirely?

No — best practice is to use synthetic edge case images as a complement to real-world data, not a replacement. Real data provides the distribution baseline; synthetic data fills the gaps where real-world collection is impractical or dangerous. A hybrid training set typically outperforms either source alone, because the model learns both the common cases from real imagery and the rare-but-critical cases from synthetic generation.

What edge cases are most commonly missed by computer vision models?

The most commonly missed edge cases fall into four categories: adverse weather (heavy rain, dense fog, snow glare), unusual lighting (direct sun, deep shadow, IR-only visibility), unusual object states (partial occlusion, atypical angles, physical damage), and domain-specific scenarios (camouflage, thermal signatures, multi-sensor fusion). All four are chronically underrepresented in standard real-world datasets because they occur infrequently and are difficult or dangerous to capture safely.