Common Myths About Synthetic Images – Debunked

Synthetic images are computer-generated images created by procedural or AI-based rendering engines rather than captured by physical cameras. In computer vision, they serve as training data, delivering pixel-perfect annotations, controlled scene variation, and practically unlimited volume without the cost or privacy constraints of real-world data collection.

What are synthetic images?

In computer vision, synthetic images are primarily used as training data for machine learning models. Each image comes with automatically generated ground truth annotations — bounding boxes, segmentation masks, depth maps, and keypoints — eliminating the cost and time of manual labeling.
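As a rough illustration of why annotations come "for free", the sketch below is a toy stand-in for a rendering engine: it draws filled rectangles on a small grayscale canvas and derives the segmentation mask and bounding boxes from the same scene description. The function name `render_scene` and all parameters are hypothetical and are not part of any real engine's API.

```python
import random

def render_scene(width, height, num_objects, seed):
    """Toy procedural 'renderer' (hypothetical): draws rectangles, returns labels.

    Returns (image, mask, boxes). image and mask are lists of rows;
    boxes holds one (x_min, y_min, x_max, y_max) tuple per object.
    """
    rng = random.Random(seed)
    image = [[0] * width for _ in range(height)]  # grayscale, 0 = background
    mask = [[0] * width for _ in range(height)]   # 0 = background, k = object id
    boxes = []
    for obj_id in range(1, num_objects + 1):
        w = rng.randint(2, width // 2)
        h = rng.randint(2, height // 2)
        x0 = rng.randint(0, width - w)
        y0 = rng.randint(0, height - h)
        intensity = rng.randint(50, 255)
        for y in range(y0, y0 + h):
            for x in range(x0, x0 + w):
                image[y][x] = intensity
                mask[y][x] = obj_id  # later objects occlude earlier ones
        # The box is the object's full extent, even where it is occluded.
        boxes.append((x0, y0, x0 + w - 1, y0 + h - 1))
    return image, mask, boxes

image, mask, boxes = render_scene(width=32, height=24, num_objects=3, seed=0)
print(len(boxes), "objects annotated without any manual labeling")
```

Because the labels are written by the same loop that writes the pixels, they cannot drift out of sync with the image, which is the property the paragraph above relies on.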

The practical appeal is clear: a team can generate tens of thousands of precisely annotated images overnight, covering scenarios that are rare, dangerous, or impossible to capture in the real world. Used alongside real data, synthetic images consistently improve model accuracy and reduce time to deployment.

Despite rapid advances in generative AI and simulation technologies, synthetic images are still misunderstood across research and the computer vision industry. For computer vision scientists focused on accuracy, scalability, and ethical AI model training, it’s essential to separate fact from fiction.

We work with organizations that depend on data precision—from defense and security applications to autonomous systems. And we’ve heard all the myths. Let’s break them down.

Myth 1: Synthetic Images Are Always Low-Quality or Unusable

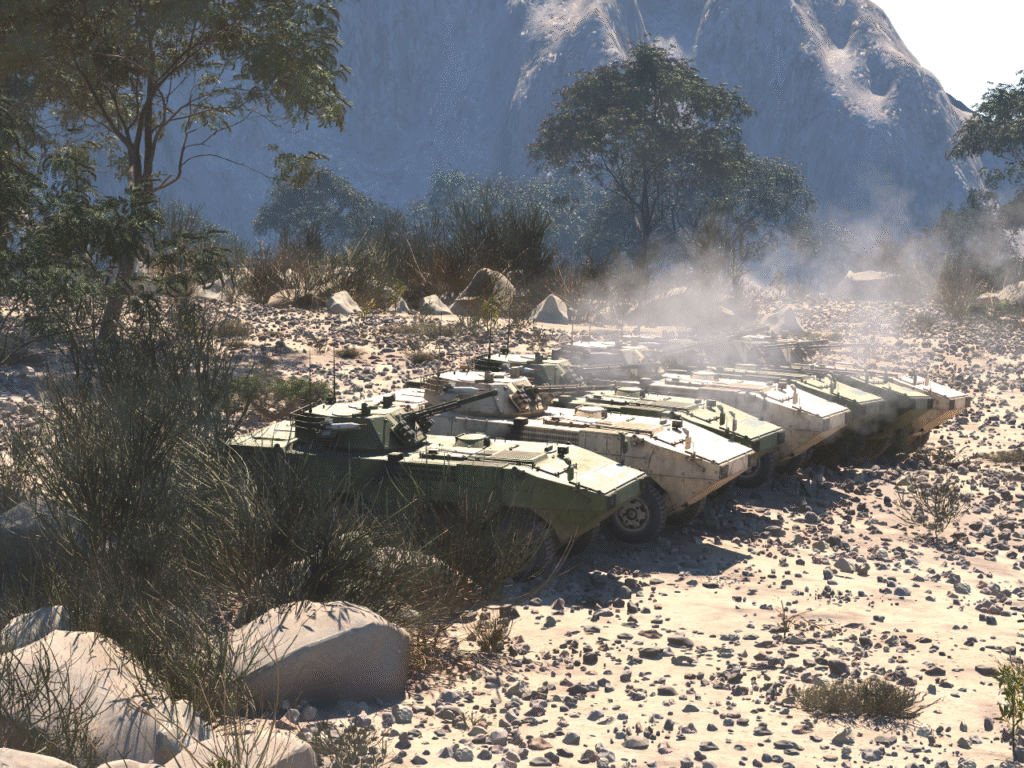

Reality: This might have been true a decade ago. But today’s generative pipelines, powered by robust procedural generation, can produce photorealistic images at scale. Many are indistinguishable from real-world photos and include pixel-perfect annotations. Quality depends on the tools, not on the concept or on outdated assumptions about synthetic image generation.

Myth 2: Synthetic Images Are Unoriginal

Reality: Not all generative models are trained to mimic existing images. In fact, synthetic datasets can be fully original, especially when built in a procedural engine with settings selected by the user. Well-designed procedural systems simulate realistic object co-occurrence, spatial arrangements, and environmental variability.

Myth 3: Synthetic Image Generation Technology Is Uncomplicated

Reality: While the software used for data generation is user-friendly, behind every robust synthetic dataset is a team of experts: 3D artists, data scientists, and simulation engineers. Producing meaningful, balanced, and domain-specific images takes careful design at the software level. For example, for a user to be able to click “generate” in the AI Verse procedural engine, an entire team of 3D artists, animation artists, and computer vision specialists works on developing technology that meets the highest standards of demanding sectors such as the defense industry.

Myth 4: Synthetic Images Are Out of Control and Unpredictable

Reality: Modern generation workflows such as procedural generation offer control over every variable, from camera angle and lighting to object type and motion. Present-day image outputs can be highly repeatable and realistic. The era of “random AI art” is long gone.
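One way to picture this control and repeatability is a seeded scene configuration. The sketch below is an assumption-laden illustration, not any engine's real interface: `SceneParams`, `sample_scene`, the object types, and the value ranges are all hypothetical. The point is only that a fixed seed reproduces the same scene parameters, and that any range can be narrowed to pin a variable down.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class SceneParams:
    """Hypothetical set of controllable scene variables."""
    camera_yaw_deg: float
    camera_pitch_deg: float
    light_intensity: float
    object_type: str
    object_speed_mps: float

OBJECT_TYPES = ("car", "truck", "pedestrian")  # illustrative values only

def sample_scene(seed, yaw_range=(0.0, 360.0), pitch_range=(-30.0, 30.0)):
    """Draw one scene configuration; the same seed always gives the same scene."""
    rng = random.Random(seed)  # private generator, no shared global state
    return SceneParams(
        camera_yaw_deg=rng.uniform(*yaw_range),
        camera_pitch_deg=rng.uniform(*pitch_range),
        light_intensity=rng.uniform(0.1, 1.0),
        object_type=rng.choice(OBJECT_TYPES),
        object_speed_mps=rng.uniform(0.0, 15.0),
    )

# Same seed, same scene: the output is repeatable.
assert sample_scene(42) == sample_scene(42)

# Collapsing a range to a single value fixes that variable exactly.
fixed = sample_scene(7, yaw_range=(90.0, 90.0))
print(fixed.camera_yaw_deg)
```

Storing the seed and ranges alongside each generated image would let a team regenerate or deliberately vary any sample later.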

Myth 5: Synthetic Images Are Unethical

Reality: Like any tool, synthetic imagery can be misused—but it can also solve real ethical challenges. For example, privacy-preserving datasets built from synthetic faces or vehicle scenes eliminate the need for personal data. With proper guardrails, synthetic generation is a force for ethical AI.

Myth 6: Synthetic Images Are Useless for Real Applications

Reality: Synthetic doesn’t mean fake; it means engineered. These datasets can be designed to reflect the statistical properties of real-world environments and are already used to train object detection models and various other computer vision models across industries. Synthetic data is not a placeholder. It’s valid training data.

Myth 7: Models Can’t Be Trained Solely on Synthetic Images

Reality: Pure synthetic training is not only possible; it’s working. Many models in robotics, defense, and AR/VR are bootstrapped entirely from generated images. Synthetic-first pipelines, often followed by domain adaptation or fine-tuning, are replacing traditional data collection in cost-sensitive and safety-critical areas, and they make model training possible in areas where real-world data is impossible to collect.
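The synthetic-first pattern can be sketched with a deliberately tiny model. The example below uses a one-dimensional nearest-centroid classifier rather than a neural network, and every name and number in it is made up for illustration. It "pretrains" on synthetic samples, then nudges the learned centroids toward a handful of real samples, which is the same warm-start-then-adapt idea as fine-tuning.

```python
def fit_centroids(samples):
    """samples: list of (feature, label). Returns {label: mean feature}."""
    sums, counts = {}, {}
    for x, y in samples:
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}

def fine_tune(centroids, real_samples, lr=0.5):
    """Move each pretrained centroid a fraction lr toward the real-data mean.

    Classes with no real samples keep their pretrained centroid.
    """
    real = fit_centroids(real_samples)
    return {y: c + lr * (real.get(y, c) - c) for y, c in centroids.items()}

def predict(centroids, x):
    """Return the label whose centroid is closest to x."""
    return min(centroids, key=lambda y: abs(centroids[y] - x))

# Hypothetical data: the real domain is shifted upward relative to synthetic.
synthetic = [(0.0, "a"), (0.2, "a"), (1.0, "b"), (1.2, "b")]
real = [(0.5, "a"), (1.7, "b")]

pretrained = fit_centroids(synthetic)          # synthetic-only model
tuned = fine_tune(pretrained, real, lr=0.5)    # adapted with two real samples

print(predict(pretrained, 0.7), predict(tuned, 0.7))
```

In this toy setup the synthetic-only model already separates the classes, and the two real samples only shift its decision boundary. That mirrors the claim above that real data can play a small corrective role rather than being the bulk of the training set.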

Myth 8: Synthetic Images Are Expensive

Reality: With the right infrastructure, synthetic image generation can be faster and cheaper than manual data collection and labeling. And it scales infinitely. Compared to field data collection, especially in hazardous or restricted environments, synthetic is often the most efficient path forward.

Conclusion

Synthetic image generation is no longer experimental—it’s foundational. For computer vision scientists building robust, scalable, and ethical AI systems, understanding the real capabilities (and limitations) of synthetic data is essential.

At AI Verse, we specialize in producing high-fidelity synthetic image datasets tailored to your training objectives—so you can build better models with fewer compromises.

Frequently Asked Questions

What is synthetic data in computer vision?

Synthetic data in computer vision refers to fully annotated images generated by software rather than captured by physical cameras. A procedural rendering engine simulates real-world scenes — controlling lighting, object placement, sensor type, and environmental conditions — and outputs the image alongside its complete ground truth annotation simultaneously. This eliminates manual labeling and makes it possible to produce large, precisely annotated datasets at machine speed.

How does synthetic data improve model performance?

Synthetic data improves model performance primarily by closing distribution gaps — the difference between what a model was trained on and what it encounters in deployment. Because synthetic generation is fully controllable, teams can deliberately produce data for underrepresented scenarios: specific lighting conditions, camera angles, object states, and edge cases. Models trained on synthetic-augmented datasets consistently show improved performance on out-of-distribution inputs compared to models trained on real data alone.
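A minimal sketch of how a team might turn this into a generation plan: count how often each scenario appears in the real dataset, then request enough synthetic samples to bring every scenario up to a target. The function name `synthetic_top_up`, the scenario labels, and the counts are hypothetical.

```python
from collections import Counter

def synthetic_top_up(real_labels, scenarios, target_per_scenario):
    """Return how many synthetic samples to generate per scenario.

    Scenarios already at or above the target get 0; scenarios missing
    from the real data entirely get the full target.
    """
    counts = Counter(real_labels)
    return {s: max(0, target_per_scenario - counts[s]) for s in scenarios}

# Hypothetical real dataset, heavily skewed toward daytime scenes.
real_labels = ["day"] * 900 + ["dusk"] * 80 + ["night"] * 20
plan = synthetic_top_up(real_labels, ["day", "dusk", "night", "fog"], 500)
print(plan)  # {'day': 0, 'dusk': 420, 'night': 480, 'fog': 500}
```

A flat per-scenario target is the simplest possible policy; a real project might instead weight scenarios by how often they occur in deployment or by how badly the current model performs on them.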

Is domain gap still a problem with modern synthetic data?

Domain gap — the visual difference between synthetic renders and real photographs — is a real but shrinking problem. Physics-based rendering engines that simulate sensor optics, lens distortion, atmospheric scattering, and material reflectance produce images visually close to real-world output. When annotation quality is high and training data covers the full range of deployment conditions, models generalize well to real-world inference even when trained predominantly on synthetic imagery.